Google is getting smarter, and it’s driven by advances in natural language processing.

Today, Google announced an update to search made possible by state-of-the-art advances in natural language processing, particularly in the pre-training of contextual representations.

“BERT AI is special, because it is the first deeply bidirectional, unsupervised language representation, pre-trained using only a plain text corpus.” – Source
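
To get a feel for what "deeply bidirectional" means, here is a toy sketch in Python. It is not BERT and not anything Google runs; the function names and the example sentence are assumptions chosen purely for illustration. It only shows which surrounding words are visible when a model builds a representation for a single word.

```python
# Toy illustration only: no neural network, no real BERT code.
# Function names and the example sentence are hypothetical.

def unidirectional_context(tokens, index):
    """Words a strictly left-to-right model can see for tokens[index]."""
    return tokens[:index]


def bidirectional_context(tokens, index):
    """Words a bidirectional model can see: everything except the target."""
    return tokens[:index] + tokens[index + 1:]


sentence = "I accessed the bank account".split()
target = sentence.index("bank")

left_only = unidirectional_context(sentence, target)
both_sides = bidirectional_context(sentence, target)

print("left-to-right sees:", left_only)
print("bidirectional sees:", both_sides)
```

A left-to-right reader has to interpret "bank" before it ever sees "account". A bidirectional one gets the words on both sides, which is roughly why the meaning of a word in a query can be pinned down more accurately.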

What Google has essentially done is apply BERT to another text corpus: the content it scraped from your web pages.

This means that Google is now able to better match your query to the content you wrote. Not just a little better – much better.

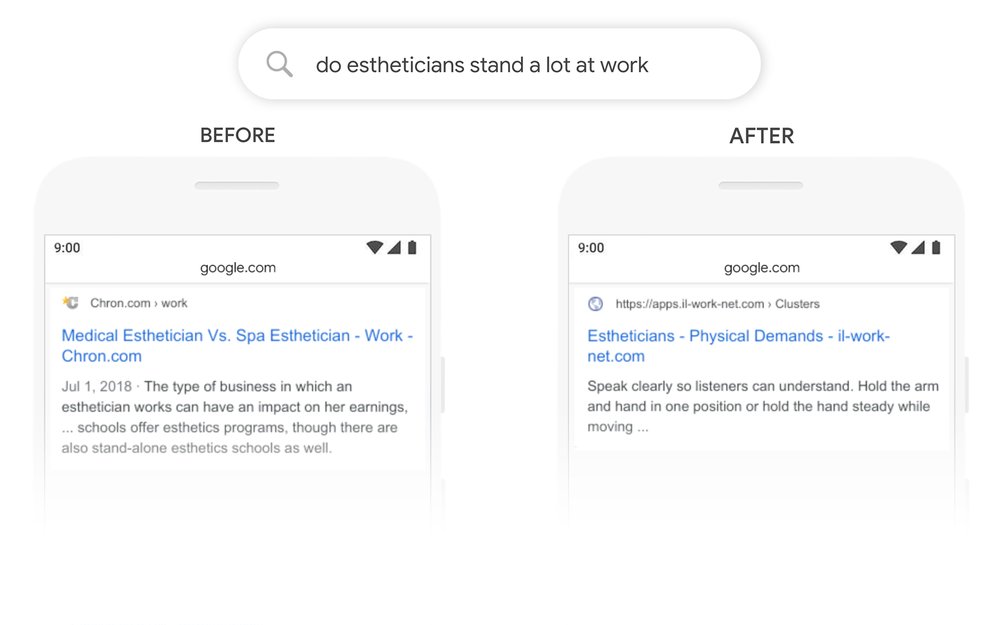

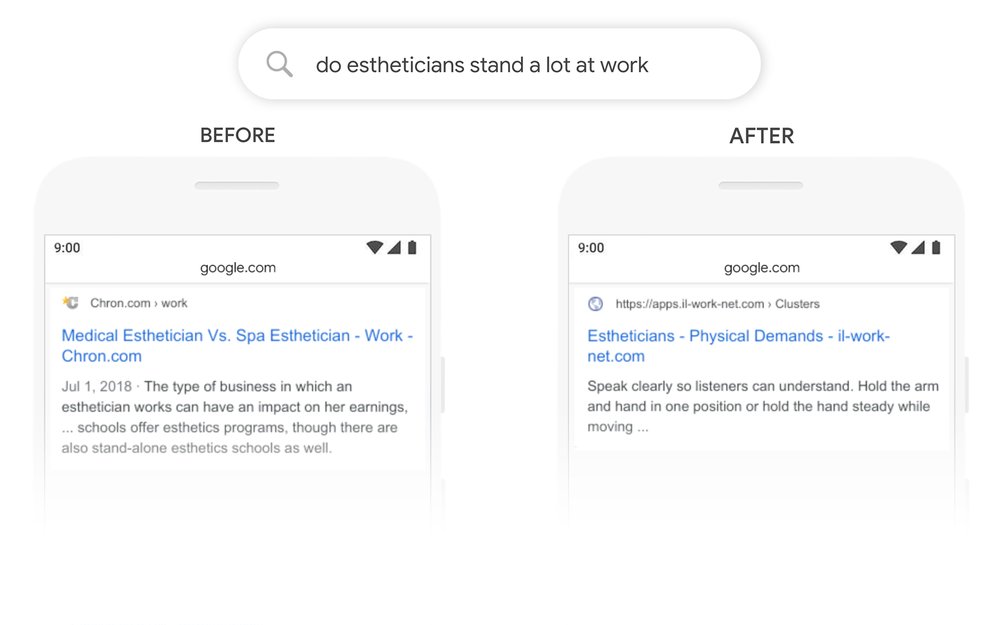

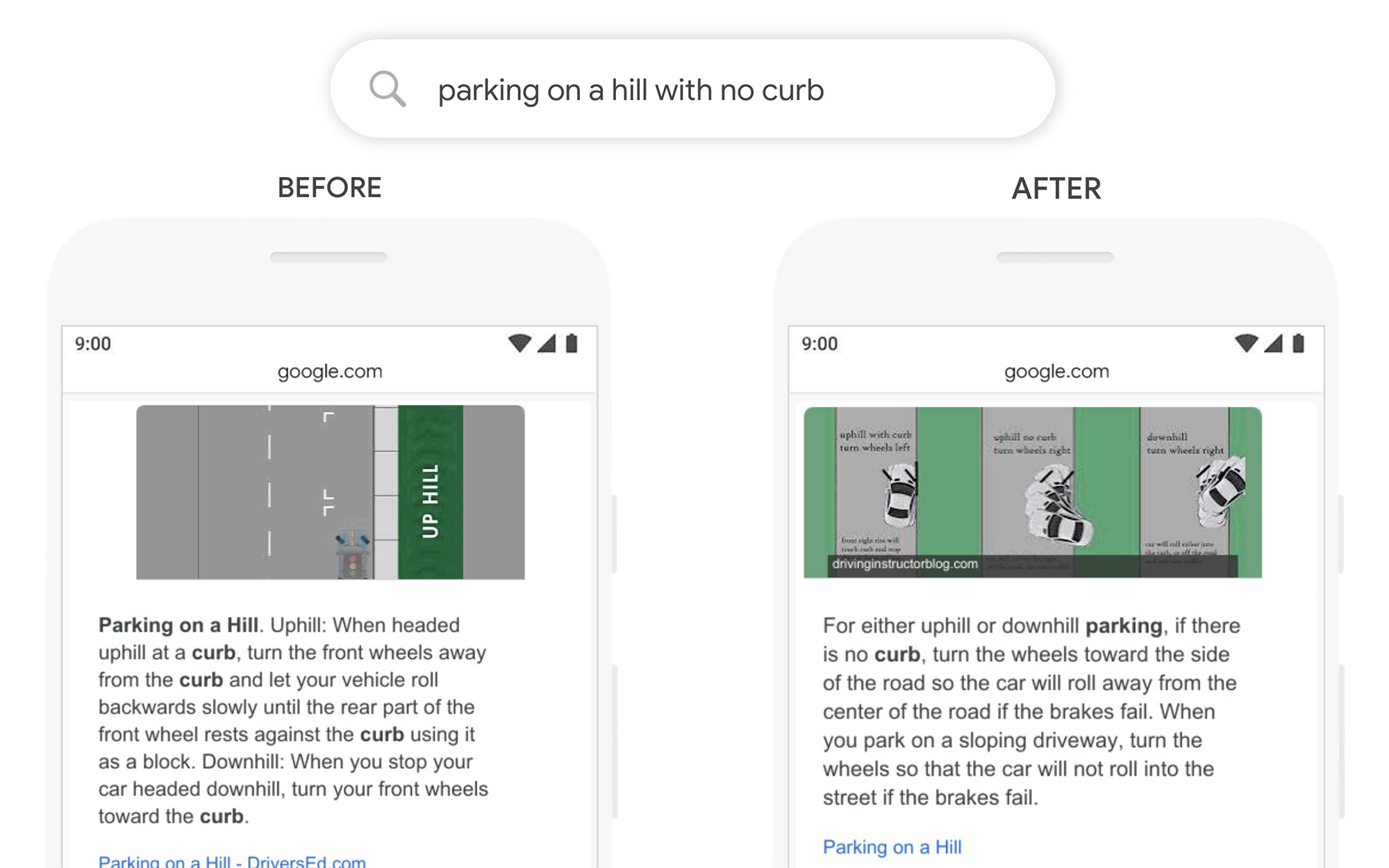

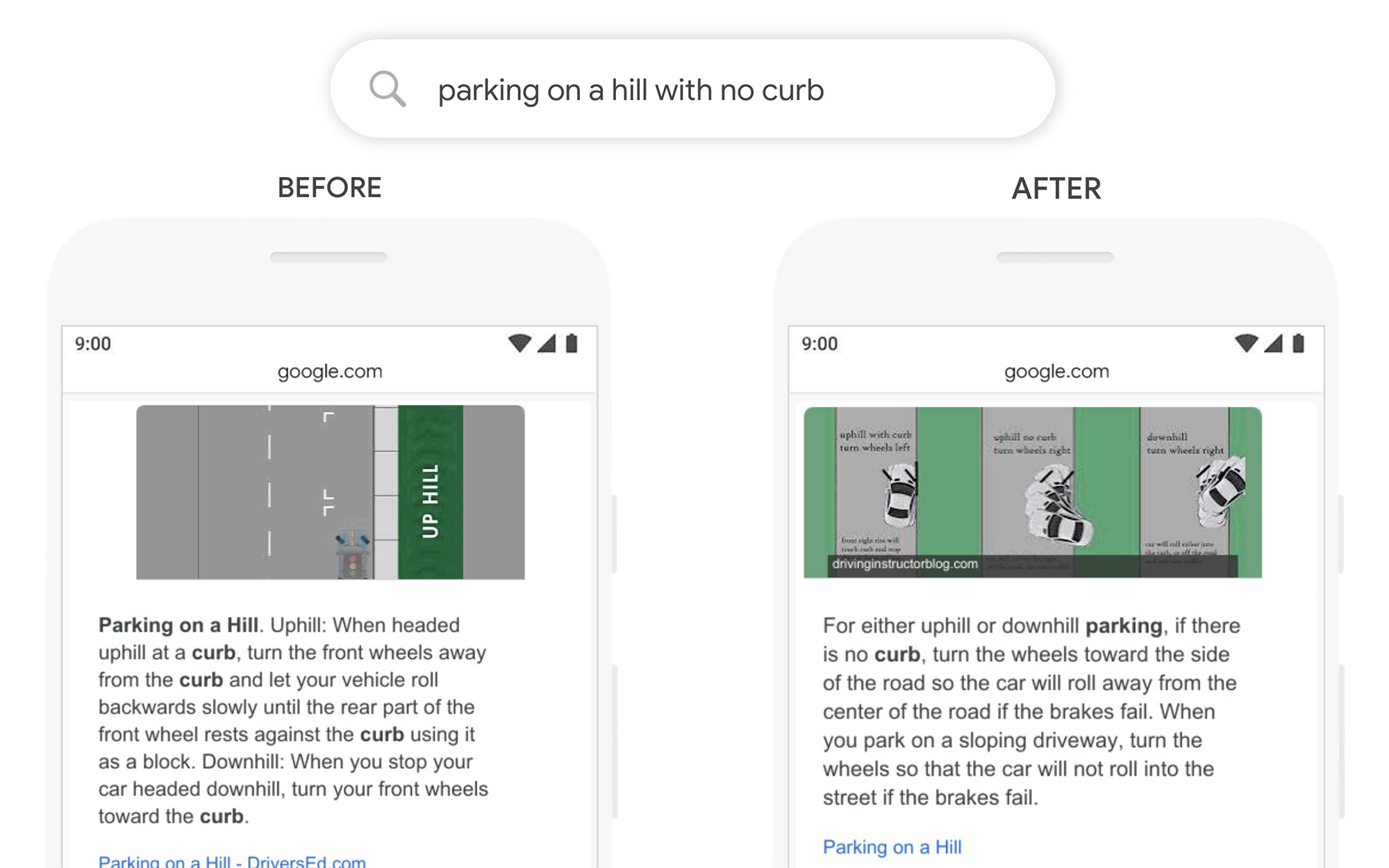

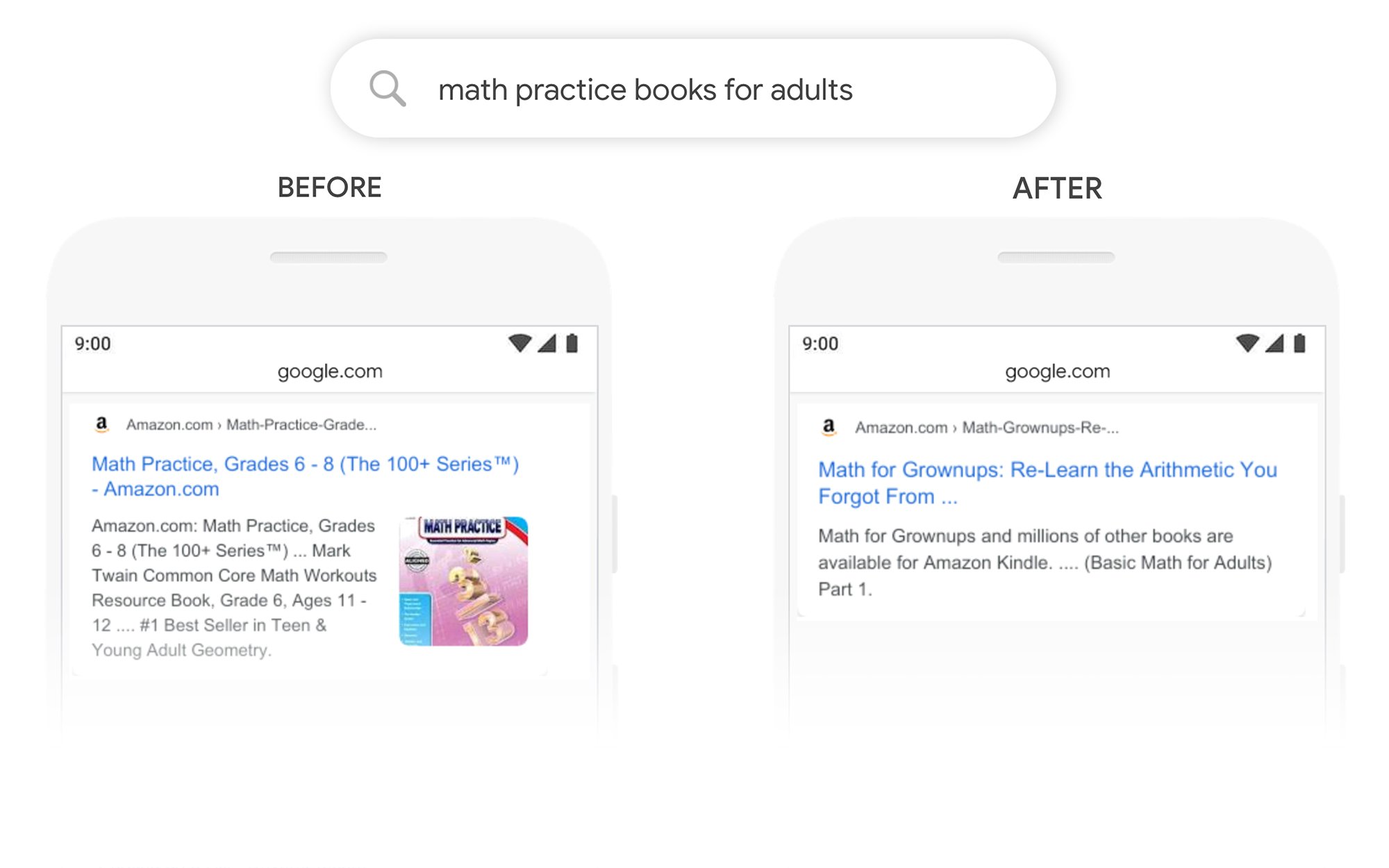

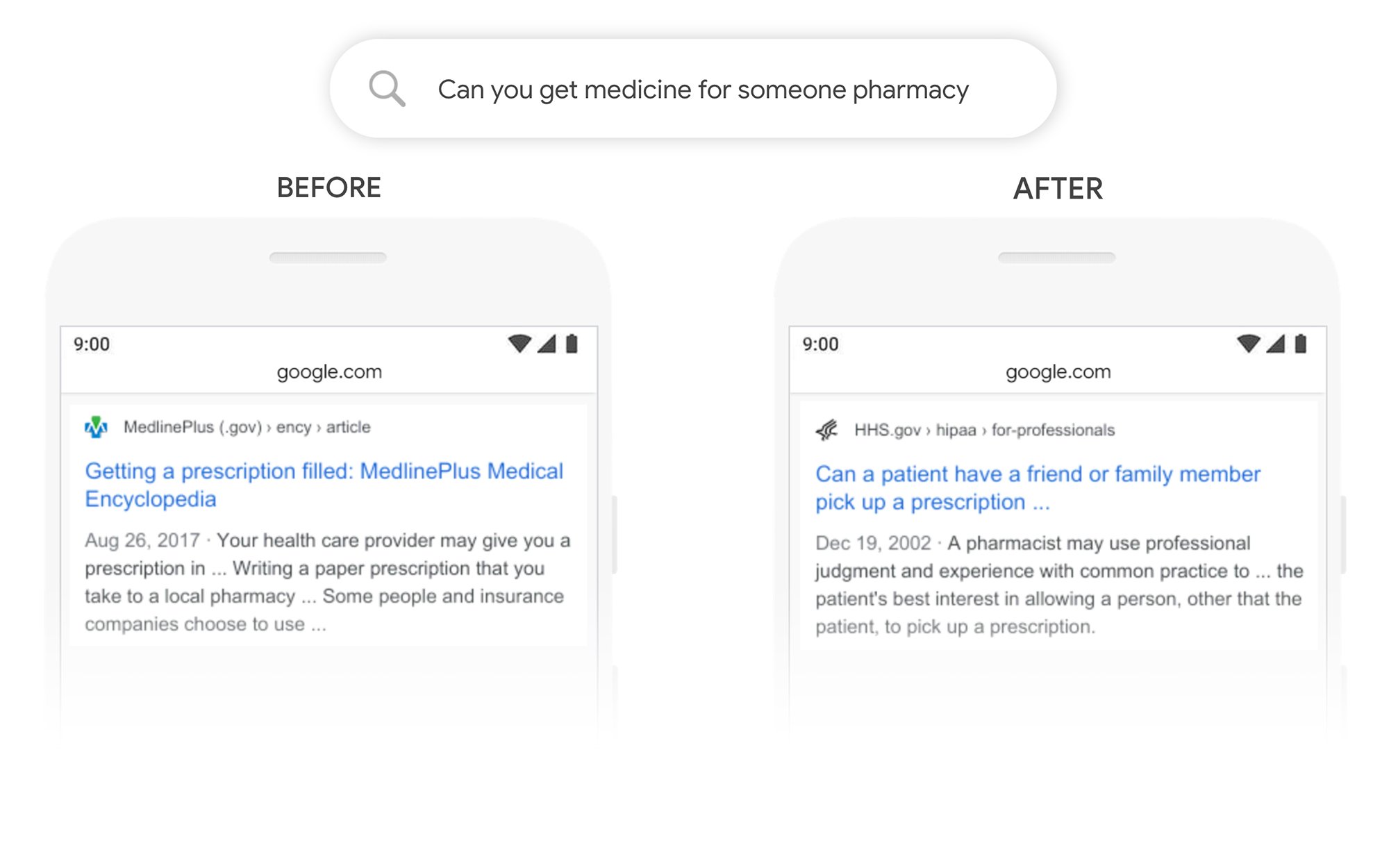

Here are some examples from Google:

Three years ago, I began working on the Rank Candidate Theory, which asserts that Google has been doing exactly this kind of processing for a while now and describes how that processing relates to the way Google ranks content.

The AI that we ended up building is designed to look at content Google is favoring in search with the goal of understanding WHY. As Google results are getting smarter, the INK editor is able to take advantage of these refinements and will better understand what BERT is looking for.

INK is free for all to download and use. Our latest update, released today, will help you to check how your existing content is affected by Google BERT.